January 2007 - Posts

Molly E. Holzschlag, formerly of the Web Standards Project (WASP) has joined the IE team on a contract basis to work on standards and interoperability issues.

"I’m very excited to announce that I will be working to advance standards and interoperability education and outreach. The goal is essentially two fold:

- To provide resources to Web designers and developers (including internal developers at Microsoft) as they work toward a more standards-oriented goal - no matter which tools and technologies are being used. [....] We’ll also be doing hands-on tutorials, continuing with our chat series, and I’ll be blogging a column called “The Daily Molly” which will provide short news, tips and tricks, and items of interest to the community

- To work with Microsoft as well as all browser and tools vendors. It is my desire that persistence coupled with diplomacy will assist us all in moving to a time where interoperability becomes the heart of the Web again"

Pete LePage, Product Manager for IE has shared his thoughts on the role Molly will be taking on while on the team:

"When Molly and I first started talking about what her role would entail, I explained to her I wanted to hire Molly, not "Microsoft Molly", but Molly. She isn't going out and pimping things, and she's not going to be telling you things she doesn't believe in, she still be out working to make the web a happier place for all web designers and developers to be."

There's a good working history between Molly (via WASP) and Microsoft. So anything that can be done to get more focus on the standards work required for IE is great news for users, web designers, developers and especially testers....

Good luck Molly!

In case you haven't heard, Jim Gray is still missing.

Our thoughts are with Jim and his family. I work in the SQL building and obviously everyone here is hoping to hear some good news very soon. We're all wishing for the best and that Jim turns up OK.

Last night's Daily Show, with Jon Stewart interviewing Bill Gates about the Vista launch, was fun.

Jon: What's your password?

Bill: <laughs>

Jon: You don't have to answer that. Is it 'Gates'?

Bill: I'll tell you later.

Jon: Hey, do you have pets?

Bill: Well actually, we keep putting off having pets. Our kids put on a lot of pressure.

Jon: Did you ever have a pet when you were younger?

Bill: Sure, I had a dog.

Jon: What was the pet's name?

Bill: That's not my password.

You can watch the interviews on Soapbox beta: Part 1 and Part 2.

Ever since I heard of the UK government's plans for an ID card system, I've felt for a number of reasons that it wouldn't work and that there would be 'unintended consequences', including making it easier for cybercriminals to commit large-scale fraud.

My primary concern has been the ability for the government to execute on their plans - let's face it, the UK government's track record in large scale IT projects is comparable to Eddie the Eagle's efforts in the Olympics (a British ski jumper who managed 56th position out of 57 competitors. The 57th was disqualified).

Since the original proposals, it seems that the UK government itself is realising the scale of this project is too ambitious and likely to fail - hence the recent scoping down. This is one of a number of 'risk management' tactics we'll read about as reality hits <the> home <office>. By the time they do eventually roll it out, it will look nothing like the original plan, will cost a load more than 'guessed' and will arrive years later than expected. And then they'll scrap it. And then they'll re-try it. Of course, I won't say I told you so.

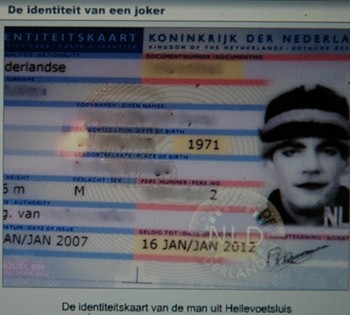

One line of argument made by the pro-ID cards faction (er, the UK government) is to point out how well ID programs are working overseas and how they are reducing benefit fraud and increasing security for all. Hmmm...I wonder what they might make of this Dutch joker:

"A man from Hellevoetsluis has managed to get an official ID card with a photo of himself made up to look like the Joker, from the Batman films. The man managed to get round strict new rules governing photos for ID cards and passports by saying the make-up and hat were part of his religious beliefs. The council gave the go ahead."

Good news for XQuery and XSLT fans: the W3C has published two new W3C Recommendations. XQuery 1.0 is an XML-aware syntax for querying collections of structured and semi-structured data, both locally and over the Web, and XSLT 2.0 is a specification for transforming data model instances (XML and non-XML) into other documents. The third spec to reach Recommendation status is XPath 2.0, an expression syntax for referring to parts of XML documents.
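XPath 2.0, XQuery 1.0 and XSLT 2.0 need a dedicated processor (Saxon is one), and Python's standard library has none. As a rough illustration of what "an expression syntax for referring to parts of XML documents" means, here is a sketch using the limited XPath-like subset built into Python's `xml.etree.ElementTree`. It is not XPath 2.0, and the sample document is made up purely for illustration.

```python
import xml.etree.ElementTree as ET

# Made-up sample document, for illustration only.
SAMPLE = """
<library>
  <book lang="en"><title>First title</title><year>2006</year></book>
  <book lang="fr"><title>Second title</title><year>2007</year></book>
</library>
""".strip()

root = ET.fromstring(SAMPLE)  # root is the <library> element

# Roughly the XPath expression /library/book/title
titles = [t.text for t in root.findall("./book/title")]

# Roughly /library/book[@lang='en']/title: a predicate on an attribute
english_titles = [b.findtext("title") for b in root.findall("./book[@lang='en']")]

print(titles)          # ['First title', 'Second title']
print(english_titles)  # ['First title']
```

A full XPath 2.0 engine adds much more (typed values, sequences, a large function library), which is part of why Derek, quoted below, finds the new specs so heavy to implement.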

Some of the big players behind getting these standards ratified (IBM, Oracle, Microsoft) are quoted on this testimonials page.

From the official press release:

"These new Web Standards will play a significant role in enterprise computing by connecting databases with the Web. XQuery allows data mining of everything from memos and Web service messages to multi-terabyte relational databases. XSLT 2.0 adds significant new functionality to the already widely deployed XSLT 1.0, which enables the transformation and styled presentation of XML documents. Both specifications rely on XPath 2.0, also significantly enriched from its previous version.

..."This is a red-letter day for XSLT users," said Michael Kay, editor of the XSLT 2.0 specification, "both for those who have been waiting patiently for this Recommendation to appear before they could use the new features, and for those who have taken a gamble by deploying the new technology before its final stamp of approval. Our biggest achievement, in my view, has been to deliver a huge step forward in functionality and developer productivity, while also retaining a very high level of backwards compatibility, thereby keeping transition costs to the minimum."

But not everyone agrees XQuery is a slam dunk. Ex-Microsoftee 'Derek', in this November post on hearing the news of the proposed recommendations, wrote at the time:

"I just noticed, XSLT 2.0, XML Query and XPath 2.0 Are Proposed Recommendations. Since leaving MS, I've stopped tracking these. It baffles me that XQuery is only just now 'shipping'. This + the-mess-that-is-XSD spell the doom of XML. This isn't just doom, like the Outlook spell-checker would try an correct the DOM to; this is real doom. These standards to too complicated and too late

...I honestly think we would be better without XQuery. Let the vendors think for themselves and see what customers actually use. XQuery is a standard looking for a use, which is backward and guaranteed to produce a problematic result.

XSLT/XPath 2.0 is a harder one... There are a couple things that XSLT 2.0 adds that were desperately needed vs XSLT 1.0. But I've managed a team implementing a commercial quality XSLT 1.0 implementation and that was a huge amount of work. XSLT 2.0 is at least 4x as much work. That is terrifying. Why not just 'fix' XSLT 1.0? It would be dramatically less work, and provide 80% of the gains, at 10% the cost."

Generally, however, the reaction I've seen to the news seems fairly positive. Kimbro Staken never thought he'd see the day. Jonathan Robie of DataDirect seems chuffed (mind you, his company has been a member of the XML Query WG). Alex Miller seems happy too (he's at BEA).

Bob Beauchemin, SQL Server MVP is speculating on the impact this news will have on the Microsoft SQL Server future product line:

"Although SQL Server 2005's XML data type doesn't exactly follow the XQuery 1.0/XPath 2.0 Data Model, rumor has it that the next version of the ISO/ANSI SQL spec (SQL2007?) may have some something to say about this, as well as something to say about XQuery in general. Right now, the SQL2003 spec doesn't specify a query language. [also a view shared by Matija Lah]

It will also be interesting to see what the SQL Server folks do with regards to updates to support the new specs in the next release, and support of a larger portion of the language constructs."

Given that Michael Rys (who also posted on this news) is a member of the SQL Server product team and has been closely involved in the relevant standards Working Groups, I think Bob's speculation may be justified... :-)

Apologies in advance. I'm attempting to couple loosely related threads here...I have no conclusion.

Leigh Dodds originally published Connecting Social Content Services using FOAF, RDF and REST for XTech 2005, where he analysed a sample of eight APIs (including the usual suspects: del.icio.us, Last.fm and Upcoming).

Dodds speculated as to why those services lacked 'hypermedia' support.

Hypermedia: links and pointers to other resources that are included in a service response to allow further discovery of, and interaction with, those other resources. Or, to paraphrase Danny Ayers, 'Hypermedia should be hyperdata, and hyperdata is the Semantic Web.'

Back to Dodds (my bold):

"Lack of linking can be attributed to three factors. Firstly, discussion of the REST style have, to date, been largely centred on correct use of HTTP rather than the additional benefits that acrue from use of hypermedia. Secondly, the RPC style that the majority of the services follow, promotes a view of the API as a series of method calls, rather than endpoints within an hypertext of data. Thirdly, the use of API keys prohibits free publishing of links, as given URL is only suitable for use by a single application, the one to which the key was assigned."

Mike Dierken (a 'Senior Troublemaker') last week provided another reason why the desired 'hypermedia' (or 'hyperdata') support might be missing from the services analysed by Dodds...again, my bold:

"This review notes that nearly all services do not use hypermedia which I think is unfortunate but understandable. I've always had a problem resolving the desire to be flexible in allowing the internal data identifiers to be used in many situations and the desire to be trivally easy for clients to access other resources by simply using links - the mashup problem. One issue I have is that the server-side software that generates the representation might not know all the possible resources made available by sibling services. Think of a US postal zip-code - if you have a service that provides weather based on zip code, should that representation also be responsible for linking to all other services - either provided by your system or some other server - that could potentially take in a zip-code? My approach is to return both direct links to known resources (tagged appropriately) as well as the short-form of the identifier, the plain zip-code for example. Microformats sort of do this, but it's a style that isn't well applied by data services."
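Mike's approach (return direct links to the resources you know about, plus the plain identifier for everything you don't) can be sketched in a few lines. This is a minimal Python sketch, not his implementation: the service names, the `example.com` URLs, the `rel`/`href` field names and the helper functions are all hypothetical.

```python
import json
from typing import Optional

# Hypothetical services that accept a zip code; these URLs are placeholders.
KNOWN_ZIP_SERVICES = {
    "weather": "https://weather.example.com/zip/{zip}",
    "maps": "https://maps.example.com/zip/{zip}",
}


def weather_representation(zip_code: str, forecast: str) -> dict:
    """Build a response carrying both the plain identifier and direct links."""
    return {
        # Short-form identifier: a client can feed it to services this server doesn't know.
        "zip": zip_code,
        "forecast": forecast,
        # Direct links to the related resources this server does know about.
        "links": [
            {"rel": rel, "href": template.format(zip=zip_code)}
            for rel, template in KNOWN_ZIP_SERVICES.items()
        ],
    }


def find_link(representation: dict, rel: str) -> Optional[str]:
    """Return the href for a link relation, or None if the server didn't provide one."""
    for link in representation.get("links", []):
        if link["rel"] == rel:
            return link["href"]
    return None


rep = weather_representation("98052", "showers")
print(json.dumps(rep, indent=2))
print(find_link(rep, "maps"))    # https://maps.example.com/zip/98052
print(find_link(rep, "census"))  # None
```

When `find_link` comes back empty, the client still has `rep["zip"]` to hand to some other service, which is the mashup case Mike describes.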

I'll give Danny the final word (for this post at least ;-) on Hyperdata:

"I'm still convinced that working at the syntax/grammar level without any common data model/language (i.e. semantics) is fundamentally flawed when it comes to global interop. On the web interop starts with URIs for concepts & resources and their relations, not angle brackets. [PS. reworded - amounts to the same, but sounds less abstract]"

More <loosely> related reading:

Scott Guthrie has announced the final release of ASP.NET AJAX 1.0 (aka "Atlas").

Other related links worth checking out:

Frank has convinced me...Starbucks is everywhere.

The other day I drove up to one of the drive-thru Starbucks, ordered my usual grande latte, paid for it, left a tip and drove off.

About a mile later I turned around to actually get the coffee.

On my recent trip to London I got snagged by the 'integrated' region encoding 'feature' on my laptop's DVD drive. Let me explain:

I bought two DVDs in the UK, and the DVD drive barfed at them. No error message, no warning, nothing; they just didn't work. So I looked up the Matshita DVD drive (UJ-840S ATA Device) in Device Manager, and the device status on the General tab told me 'This device is working properly.' Really, I thought to myself.

So I clicked on the DVD Regions tab of the Properties dialogue box. It said:

"Most DVDs are encoded for play in specific regions. To play a regionalized DVD on your computer, you must set your DVD drive to play discs from that region by selecting a geographic area from the following list.

CAUTION You can change the region a limited number of times. After Changes remaining reaches zero, you cannot change the region even if you reinstall Windows or move your DVD drive to a different computer.

Changes remaining: 4

To change the current region, select a geographic area, and then click OK."

You what?? No, it's not OK. I want to watch 'genuine' DVDs that I acquired legitimately. I bought them, in a shop!

I live in the US, and I travel. I don't know about you, or the millions of other people who travel each year, but I buy stuff when I'm traveling and want to 'consume' these things wherever I happen to be - that includes food, clothes, music and yes, movies. Is that so wrong??

I don't see regional encoding warnings on the back of a t-shirt. Imagine: "You may only wear this t-shirt within the country you bought it in".

I don't expect to see a message on the back of my sandwich cautioning me that "You may not eat this product outside of the UK, although the mustard came from the US, so if you are thinking of eating the sandwich there you can eat the mustard, but nothing else. Especially the lettuce. Oh, and you can only eat the mustard 4 times if you are so inclined".

I can listen to CDs anywhere, no matter where I bought them. So what's up with this DVD thing?

I admit, it didn't totally surprise me as I've previously (and knowingly) bought multi-region DVD players to play region encoded movies bought on my travels. However, I was surprised to find that PC DVD drives were subject to the same insanity. I didn't realise this.

'A history of DVD copy protection' outlines the DVD Consortium's role in this debacle:

"DVDs have had the option of embedding a region code in the DVD disc. Different regions of the world were assigned different codes, and every DVD player manufacturer had to sign an agreement with the DVD Consortium stating that they would only play DVD discs of a certain region. And the manufacturers had to play by the Consortium’s rules, because the Consortium was the only one who could give out the decryption keys that would allow the player to decrypt the CSS encryption used on commercial DVDs."
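To make the mechanism concrete, here is a toy Python model of a drive that holds a single region setting plus a limited change counter, and of a disc that lists the regions it allows. The class and method names are hypothetical, the starting count of 4 is simply the value shown in the dialog quoted earlier, and real drives enforce the lock in the drive itself (which is why the dialog warns that reinstalling Windows won't reset it), not in code like this.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class DvdDrive:
    """Toy model of a region-locked PC DVD drive; illustrative only."""

    region: int
    changes_remaining: int = 4  # the count shown in the Windows dialog

    def can_play(self, disc_regions: set[int]) -> bool:
        # A region-coded disc lists the regions it allows; the drive's
        # single current region must be one of them.
        return self.region in disc_regions

    def set_region(self, new_region: int) -> None:
        # Assumption for this sketch: every change uses up one remaining change.
        if self.changes_remaining == 0:
            raise PermissionError("region locked: no changes remaining")
        self.region = new_region
        self.changes_remaining -= 1


drive = DvdDrive(region=1)   # region 1 covers the US
uk_disc = {2}                # region 2 covers the UK (and the rest of Europe)
print(drive.can_play(uk_disc))                            # False
drive.set_region(2)
print(drive.can_play(uk_disc), drive.changes_remaining)   # True 3
```

Each round trip between a US disc and a UK disc costs two changes, so in this model a traveller with four changes gets two round trips before the drive is stuck for good.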

What I really don't understand is the sense of the policy. I understand what the DVD Consortium is trying to do, but does this policy make sense to the law-abiding 'consumer'?

The policy leaves me with the following options as a 'consumer' who wants to watch DVDs on my laptop AND abide by the DVD Consortium's policy:

- Change the region setting only four times during the lifespan of the DVD drive (eh, right)

- Buy DVDs from other countries but don't watch them (eh, right)

- Don't buy DVDs from other countries (eh, right)

- Buy multiple DVD drives (to cover every region). Carry these drives around with me while I travel and swap them in and out of my laptop (eh, we've truly landed in Willy Wonka's fantasy chocolate factory)

Over the years, the encryption tech has gone through multiple updates due to the (successful) efforts to work around the limitations, including region encoding. So, does one become a 'naughty' consumer? What alternatives to the above are there for the sane? How about:

- Get some DVD ripping software, buy DVDs in whichever country I happen to be in, and watch them whenever and wherever I want

- Er, that's all.

Gee, let me think...which should I go for?

Tim O'Reilly has posted the second part of the Q4 2006 computer book sales report, comparing Q4 2006 with Q4 2005. It covers sales of top-selling computer-related books in the US, not just O'Reilly titles. Always interesting as an indicator of trends.

Here are the highlights for me (Q4 2006 vs. Q4 2005):

- Overall 'computer' book sales up 4%

- Databases category up 6% (I can't see the detailed breakdown in the enterprise db space other than SQL Server is up and Oracle is down - hope Tim provides an update on this later)

- Programming languages: Java down 14%, '.NET languages' up 34%, Ruby up 53%, Python up 37%, Perl down 23%

- Web design and development category up 7%

- Ajax up 55%, Rails up 43%

- Business apps category down 8%: 'crm general' up 256%, collaboration down 23%, SharePoint down 24% (SharePoint Server 2007 is coming)

- Windows XP down 33% (Vista effect I suspect...)

Don Ferguson, IBM Software Group's former Chief Architect, has appeared on the Microsoft.com site with his own Executive bio. According to the bio page Ferguson is now

"Microsoft Technical Fellow in Platforms and Strategy, in the Office of the CTO

...Some of the public focus areas [at IBM] were Web services, patterns, Web 2.0 and business driven development. Don guided IBM’s strategy and architecture for SOA and Web services, and co-authored many of the initial Web service specifications."

'Office of the CTO' - that's Ray Ozzie's team.

His old IBM blog is still up, but hasn't been updated since September 22. I've not come across his blog before, but I thought this entry from last August was of interest:

"Software in the Next Five Years

A customer asked me to present my view of software in five years. I was very flattered and tried my best. If anyone is interested in speaking with me about my conclusions, drop me an email. Once I get a little more feedback, I will make the presentation and paper available. To pique interest, my top ten conclusions are:

- Software appliances and SW configurations integrated with virtual middleware

- Situational applications and end-user Web programming

- An enterprise SW architecture that includes open source, good enough middleware and products from IBM and other companies

- SOA and business policy/rules

- Composite Applications and Business Services

- SW evolving to exploit next generation HW, e.g. multi-core and intelligent network storage.

- SOA and EDA

- A web approach to data and storage

- Recipes, Patterns and Templates

- Web 2.0"

Congratulations Don!

Via InfoQ.

Quick backgrounder: In October of last year, Tim Berners-Lee called for a renewed effort to progress the HTML standard beyond its current version (HTML 4.01), ratified by the W3C eight years ago. More recently, the WHAT-WG, an independent group, was established out of frustration with the lack of progress made by the W3C. Since October, the W3C has made some progress: it is chartering a new HTML Working Group and has appointed a new chair, Chris Wilson, Program Manager on the IE team at Microsoft.

In December, Daniel Glazman expressed his concerns with the new HTML WG, including those regarding the appointment of Chris as the new chair of the W3C's HTML WG - the main thrust of his argument being that having a Microsoft employee and a major browser vendor in this key position would encourage poor feedback from the press and the community:

"If I do trust entirely the individual Chris Wilson for the chair of this Working Group, it's with deep concern I see a major browser vendor take the chair of the most visible WG in the Consortium. From my point of view, desktop browser vendors should be banned from the chair of that Group, to avoid (a) bad feedback from the press and the community (b) avoid issues between the WG and the chairman's parent company about the directions taken by the Group. I know Microsoft already had W3C chairs in the past - or even present - but the HTML WG is different in its very high visibility, and I certainly fear the "Microsoft puts its hand on HTML" press articles we're going to face."

Today, Chris Wilson has posted his response to Daniel Glazman's concerns.

I've been out of the tech news loop for the last month and so completely missed the fact that Chris was made chair of the new group, but I think his appointment is very good news for the future of HTML and for the web as a whole. Now, you could say "well, you work for Microsoft, so you would say that". That's hard to argue with. But let me explain why I think it's a good thing.

As TBL pointed out, the official HTML spec has been stuck in a mire for nearly a decade, and it's no secret that until very recently Microsoft's implementation of web standards in IE has been nothing short of appalling. Since the development of IE7, things have improved in this respect, but they aren't perfect.

Having the Microsoft IE team align with web standards, and rid web developers and designers of the overhead that comes with having to design for a broken rendering environment, has been wished for by many for years. Microsoft's past distance from, and lack of engagement in, the web standards process was hurting the web: its users, its developers and the owners of websites. The overhead was real. Prior to joining Microsoft I worked at a web development and design agency for seven years and know too well the grief this caused our team. Cussing Microsoft was literally part of the daily routine as testers reported 'bugs' that weren't actually bugs.

It is why Firefox has done so well: the community's response has been to 'take back the web', a concept that truly resonated with the tech community. Because of Firefox, Microsoft has realized that conforming to web standards has to be a priority and not an afterthought. Recent efforts such as Microsoft working with WASP on web standards are steps in the right direction, as is IE7's progress with CSS. But these recent efforts have been about making things work with current specs and standards. We also need to look ahead, and that future needs to have Microsoft's involvement. Personally, I'm not worried about Daniel's concerns around the media's response to a Microsoft employee chairing the HTML WG; frankly, the W3C's reputation relating to the HTML spec couldn't be any worse than it is today. The only way is up.

The anti-Microsoft crowd are anti for a number of reasons, and one legitimate reason has been Microsoft's historical disrespect of web standards. As I see it, by having Chris in this position Microsoft is making the biggest commitment it can to respecting web standards in the future. That has to be a good thing for all of us.

Finally back to work after a short break that included a visit to London and Antwerp.

In many ways, London is still its good old self and I always enjoy coming back for a quick visit to see family and friends and immerse myself in the vibrancy and creative energy of the city. However, there are many things about this last trip that reminded me of why I was happy to leave London over two years ago.

For one thing, the prices and cost of living in London are ridiculous. They always have been, but the reminder was a shock. A one-way tube ride is now £3 ($6), regardless of the zones you travel between. A vodka martini in a so-so London bar is £7.50 ($16). A three-mile cab ride will rip you off for £15 ($30), with a driver who seems aggrieved that he is driving you somewhere, and so on. That's the everyday stuff (well, maybe not the martini). The ratio of property prices to square footage is getting worse too.

It's a dirty city (dirtier than I remember), with overly aggressive commuters and bastard parking meter attendants. The attitude (as well as the tube) stinks. To get into a tube car you need a martial arts black belt and a cold heart. No one says 'excuse me' when they shoulder you. No one makes room or shows consideration for others while travelling, be it on foot or by car. Pushing and elbowing your way through the bustle is standard operating procedure, as everyone seems to be in a drastic rush to get where they are going. The sad thing is, you end up having to behave the same way to get from A to B, or you'll never get there, not in one piece at least. After living there for 27 years, the instincts I thought I had rid myself of over the last two years quickly kicked in again. When in Rome, and all that, but I don't like my aggressive side.

I wish I could say something nice about London to finish off this post, but I'm finding it hard. Oh yeah, here's one - London: crap to live in, but good for quick visits.
